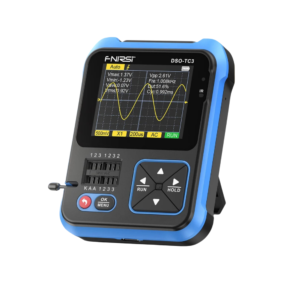

- FNIRSI Oscilloscopes

- Soldering Irons, Kits & Accessories

- On Sale

- Best Sellers

- Electronic Testing Equipment

- Accessories

- Audio Board Amplifiers

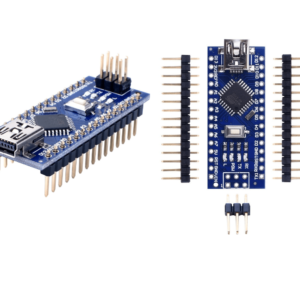

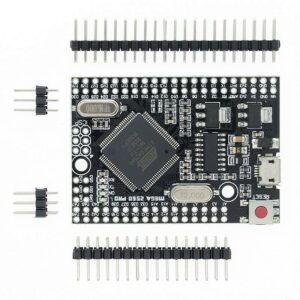

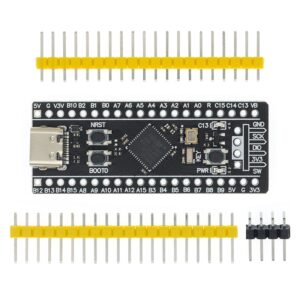

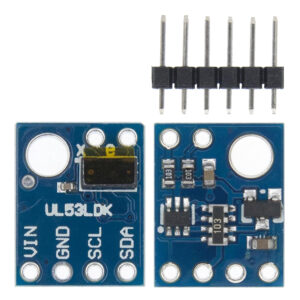

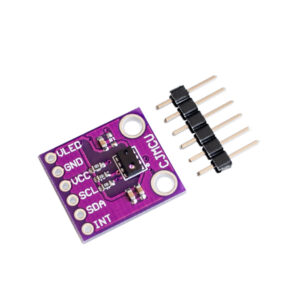

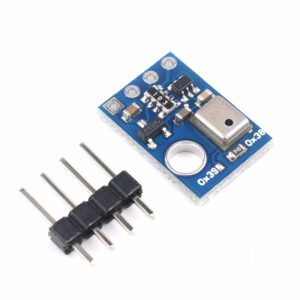

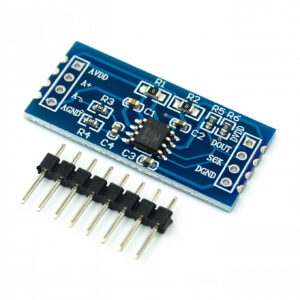

- Compatible with Arduino

- Wemos D1 Mini Boards – WiFi & Bluetooth

- Compatible with Arduino Starter Kits

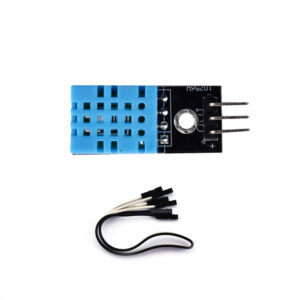

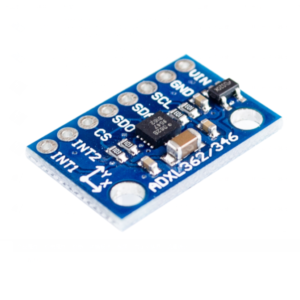

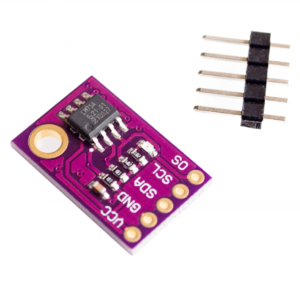

- Arduino Sensors

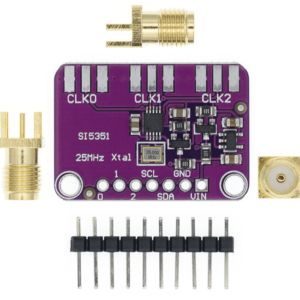

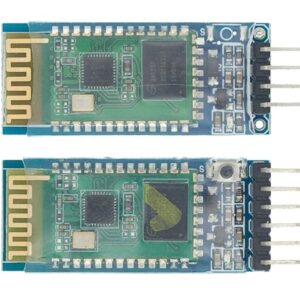

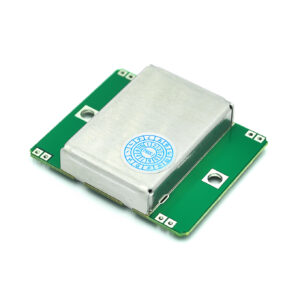

- Communication Boards

- Arduino Shields

- Rechargeable Lithium Polymer Batteries

- Microcontroller Boards with WiFi & Bluetooth – ESP32, ESP8266

- Electronic Components

- DC to DC Converters – Buck, Boost & Buck-boost Converters

- Relay Modules

- Servo Motors and Motor Drivers

- Electronics Tools

- Uncategorized

Categories

Electronic Components on Sale

Best Selling Components

Same Day Shipping before 3 p.m.

30 Days Returns

FREE UK DELIVERY*

FNIRSI Products

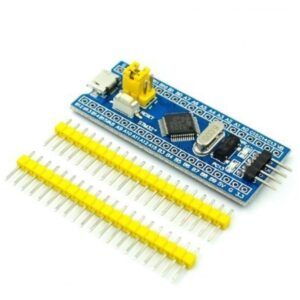

Arduino Boards

Welcome to Kunkune – Your Trusted UK Based Electronic Component Store

We are dedicated to providing DIY electronics enthusiasts, electronics hobbyists, and professionals with top-quality electronic components and Arduino kits.

Whether you’re a seasoned expert or just starting out, our extensive range of products ensures that you have everything you need to bring your electronic projects to life.

-Customer-Centric Approach: Our primary focus is you, our valued customer. We understand the importance of quality, affordability, and reliability.

That’s why we’ve been consistently providing easy-to-use Arduino Starter Kits, Arduino Sensors, Arduino boards, ESP32, ESP8266, Wemos D1 mini, Servo motors, and much more.

-Competitive Prices: At Kunkune we source the best products and offer them at unbeatable prices. Our goal is to make electronics accessible to everyone without breaking the bank.

-Fast Shipping: We understand the excitement of starting a new project and the importance of timely deliveries. That’s why we offer fast shipping options, including Standard 24H service and Next Day Special Delivery. With our partners from Royal Mail, you can be assured of receiving your products right on time.

-UK Based Operations: All our products are located in the United Kingdom, ensuring quick deliveries and easy returns. If you need larger quantities or specific items not in stock, rest assured, we can arrange it for you within 10 business days.

We invite you to explore our store and discover the endless possibilities that electronics offer. Whether you’re working on a school project, a personal hobby, or a professional assignment, Kunkune is here to support your journey. We hope our products inspire you to create, innovate, and achieve your electronic dreams.